Opportunity space

Mathematics for Safe AI

Safeguarded AI

Backed by £59m, Safeguarded AI sits within the Mathematics for Safe AI opportunity space and aims to demonstrate a world where we can use fleets of AI agents to model and verify critical cyber and cyber-physical systems, radically boosting societal resilience.

Our goal

AI agents can now write software at a pace that far exceeds human engineering capabilities. Our ability to verify whether that software is correct has not kept up. This gap is evident in software engineering today, but it will become equally pressing in a growing number of domains of science, engineering and decision making more broadly.

This programme aims to close that gap, enabling us to safely deploy AI to transform domains where trust, correctness, and fail-safety are essential.

To advance mathematical modelling and formal verification, we are building and testing an open-source assurance toolkit that lets fleets of AI agents produce formally verified artefacts at unprecedented speed and scale.

Our goal is to equip AI-produced code, control systems, and models with quantitative guarantees of correctness and fail-safety. We will demonstrate these capabilities in one or several critical cyber and cyber-physical domains, such as secure software and hardware, energy grid balancing, supply chain management, and clinical trial design.

If we succeed, we will be able to use AI safely in domains that currently require human-in-the-loop oversight, while simultaneously strengthening resilience – moving faster, across larger systems, and with stronger guarantees than human teams alone can provide.

We have updated our thesis (as of May 2026) to reflect the programme’s sharper focus: expanding the ambition and scope of TA1 ‘Tooling’, and applying these capabilities to high-impact cybersecurity challenges.

This pivot responds to the rapid advance of frontier AI capabilities and positions the programme where it can deliver the greatest impact: building a broader mathematical assurance toolkit and demonstrating its value in cybersecurity. You can access the original thesis in our programme updates below.

Funding open until 1 July 2026

As part of Technical Area 2, this funding call pursues the question: given the advances in AI and formal methods, what are the most ambitious, security-critical systems we can verify today?

To answer this question we are looking to fund teams that fall into one of two tracks. Track 1, building and verifying security-critical components, and Track 2, adversarial evaluation.

Technical areas

This programme is split into two technical areas (TAs), each with its own distinct objectives.

Tooling

We can build an open-source mathematical assurance toolkit that lets fleets of AI agents produce formally verified artifacts at unprecedented speed and scale.

Applications

AI-enabled formal methods can deliver high-impact efficiency and security gains when applied to real-world cybersecurity challenges.

Meet the programme team

Our Programme Directors are supported by a core team that provides a blend of operational coordination and highly specialised technical expertise.

Nora Ammann

Programme Director

Nora has spent nearly a decade working on making transformative AI go well. Her work spans AI assurance – hardware guarantees, formal verification and the coordination needed for safety claims between untrusting parties. She previously founded Principles of Intelligence, bringing together AI researchers, neuroscientists, and physicists to study the foundations of intelligent behaviour.

Maddy McInerney

Programme Specialist

Maddy joined ARIA from a background in scientific operations. Having previously held roles at the Global Pathogen Analysis Service and Public Health England, she brings experience in driving the delivery of high-impact research. She holds a BA from the University of Oxford and supports ARIA as an Operating Partner from Pace.

Dom Cowderoy

Programme Support

Dom is a project, operations and people specialist with experience in the technology and management consulting sectors. Before joining ARIA, he led talent and operations functions at Pace with prior experience as a resourcing lead at Accenture. He supports ARIA as an Operating Partner from Pace.

David 'davidad' Dalrymple

Technical Advisor

davidad is a software engineer with a multidisciplinary scientific background. He’s spent five years formulating a vision for how mathematical approaches could guarantee reliable and trustworthy AI. Before joining ARIA, davidad co-invented the top-40 cryptocurrency Filecoin and worked as a Senior Software Engineer at Twitter.

Creator spotlight: Improving the biopharmaceutical supply chain

Pharmaceutical supply chains are a cyber-physical operating context where AI could unlock transformative improvements, but safe deployment is critical. The SAILS (Safeguarded AI for Logistics and Supply Chain Management) team is researching how AI can make pharmaceutical supply chains more resilient and effective.

Led by Dei Vilkinsons and Ciaran Morinan, CEO and CTO of start-up HASH, they conducted interviews with more than 80 biopharmaceutical supply chain professionals, built a library and mathematical models of supply chain processes, and identified and validated the most promising applications of Safeguarded AI. They are actively working with industrial partners to build, test, and scale trusted and valued AI solutions that can reduce costs and deliver more drugs on time.

- Read the updated programme thesis

We have updated our thesis to reflect the programme’s sharper focus: expanding the ambition and scope of TA1 ‘Tooling’, and applying these capabilities to high-impact cybersecurity challenges.

This pivot responds to the rapid advance of frontier AI capabilities and positions the programme where it can deliver the greatest impact: building a broader mathematical assurance toolkit and demonstrating its value in cybersecurity.

- Watch now

After launching the Safeguarded AI programme and establishing its technical foundations, David ‘davidad’ Dalrymple has decided to transition to a new role as Technical Advisor. We are delighted to announce that Nora Ammann – who has helped run the programme as Technical Specialist since before it began - is stepping into the role of Programme Director. Nora has effectively been running Safeguarded AI alongside davidad. She has deep technical context, established relationships with our Creators, and a clear vision for the programme's next phase.

There are no changes to TA1 Creator contracts, funding, or objectives. Work on the toolsuite continues as planned. The core mission – building a mathematical assurance toolkit for AI – remains the same. An updated thesis will be published to reflect the programme’s shift toward application, with initial efforts likely focusing on cybersecurity and microelectronics.

Alongside this shift, the programme will mature the toolsuite into open, usable infrastructure, publish an updated thesis, and expand the team, including hiring a new Technical Specialist. We will also continue to explore opportunities to extend the programme’s scope over time.

Programme progress and ambition

Nora and davidad sat down with our CEO, Kathleen Fisher, to reflect on the programme’s progress under davidad’s leadership and its ambitions as it transitions to the next phase.

Late last year, we provided an update that we were redirecting our efforts away from Technical Area 2 (TA2) as originally planned and doubling down on expanding the ambition and scope of Technical Area 1 (TA1). Following this, we’ve been reviewing how the rest of the programme can best meet this new objective. After careful consideration, we have decided to shift the primary focus of TA3 towards applications in cybersecurity.

Why we are pivoting

When we launched TA3, our objective was to deploy AI solutions in real-world cyber-physical use cases while providing quantitative safety guarantees, to demonstrate a deployment API that would greatly reduce AI risks versus the general-purpose chat API. Since then, however, frontier AI capabilities have advanced at a pace that has exceeded our original projections, falsifying the hypothesis that high-risk dual-use AI might not be made openly available.

Given this acceleration, we believe we can deliver the greatest impact by:- Broadening the TA1 toolkit – Expanding and strengthening the core technical safety tools currently under development.

- Prioritising cybersecurity – Concentrating our demonstration efforts where the need for robust, guaranteed AI tooling is both most acute and most time-sensitive.

This shift ensures that TA3 remains aligned with the evolving risk landscape and focused on the areas where ARIA can add the most value.

What this means for current projects

- TA3 Phase 2 Solicitation – We will not be proceeding with the planned Phase 2 call for cyber-physical applications.

- Current TA3 Phase 1 Projects – All existing TA3 Phase 1 projects will continue through to their scheduled completion.

- Potential Extensions – We are considering extensions for a small number of TA3 Phase 1 teams that have demonstrated exceptional progress. We will contact those teams directly in due course.

- Learn more

While our conviction in the vision for the Safeguarded AI programme remains unchanged, the pace of frontier AI progress has fundamentally altered our path – instead of investing in specialised AI systems that can use our tools, it will be more catalytic to broaden the scope and power of the TA1 toolkit itself, making it a foundational component for the next generation of AI.

Featured insights

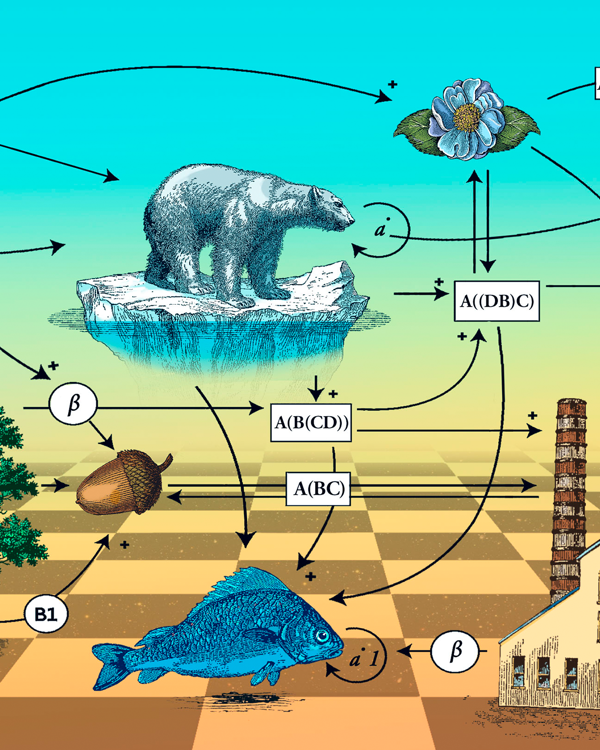

Can the most abstract math make the world a better place?

Quanta Magazine

Natalie Wolchover explores whether applied category theory can be “green” math.