The Scaling Inference Lab

The Scaling Inference Lab is a testbed for AI hardware technologies, prioritising rapid iteration, open collaboration, and long-term sustainability.

Backed by £50m, the Lab is set up to address a key bottleneck for startups and research groups developing innovative AI hardware: the lack of an open platform to showcase their technologies due to the dominance of large, closed systems in today’s AI hardware market.

Delivered by CommonAI CIC, the Lab will create real, deployable rack-level AI systems, designed to make it easier for startups to insert new technologies, with the goal of rapidly reducing the cost of large AI systems. A new rack will be built every 6 months and serve as a 'structured pilot', incorporating one unproven technology (accelerators, memory, interconnects, etc.) and bringing it up in a system on which actual end users can run AI models. For each build, we should be able to compare the full systems-level performance of a particular technology to existing commercial offerings.

"The majority of industry currently focuses on pushing the bounds of bleeding edge performance through tighter integration of proprietary (and largely homogeneous) systems. We believe costs can be driven down at a much faster rate by pursuing an alternate path: placing emphasis on low-power compute coupled with novel networking and software innovations, all facilitated with open-source interfaces and rapid iteration."

Suraj Bramhavar

Programme Director

Inaugural partners

Partners in the Lab provide services, technology, or expertise either for individual clusters or across the full initiative.

CommonAI

CommonAI CIC will lead the delivery of the Scaling Inference Lab, both operationally and through a dedicated research and engineering effort. The CommonAI model and its key contributors have a proven track record of moving ideas from open-source research to global scale. This was exemplified by the OpenTitan project, which was incubated by the lowRISC CIC and is being deployed globally by Google and others.

Website | LinkedIn

Callosum

Callosum's technology orchestrates diverse AI systems across heterogeneous chips, enabling multi-agent inference architectures that no single-chip platform can support. As part of the Lab, Callosum will establish a new inference scaling law, demonstrating that heterogeneous orchestration delivers dramatically better cost-performance gains than single-model approaches.

Website | LinkedIn

Sequrity.AI

Sequrity.AI are reimagining sustainable hardware solutions for AI workloads to ensure a secure and reliable end-to-end inference supply chain. As part of the Lab, they will provide software solutions to optimise our initial clusters, and help ensure these clusters are benchmarked against the best commercial offerings.

Website | LinkedIn

Oriole Networks

We are in advanced talks to partner with Oriole, who are pioneering a new class of AI infrastructure powered entirely by photonics, replacing today’s electronic bottlenecks with nanosecond‑speed optical circuits. Within the Lab, Oriole will stand up an AI cluster on the PRISM fabric to validate performance and integration. This pilot will be the foundation for a radically new architecture in which the entire data centre functions as a single, tightly coupled accelerator, unconstrained by topology or switching limits.

Website | LinkedIn

Become a partner

We are now inviting expressions of interest from organisations who would like to participate in the development of future racks.

Each cluster will be built in partnership with at least one external organisation, with ARIA funding system integration and testing. Participating partners will be expected to provide matched technical and/or financial contributions for technology development.

If you would like to explore participation, express your interest by completing the form below. The selection process will be run by CommonAI CIC.

Support from the ecosystem

Featured insights

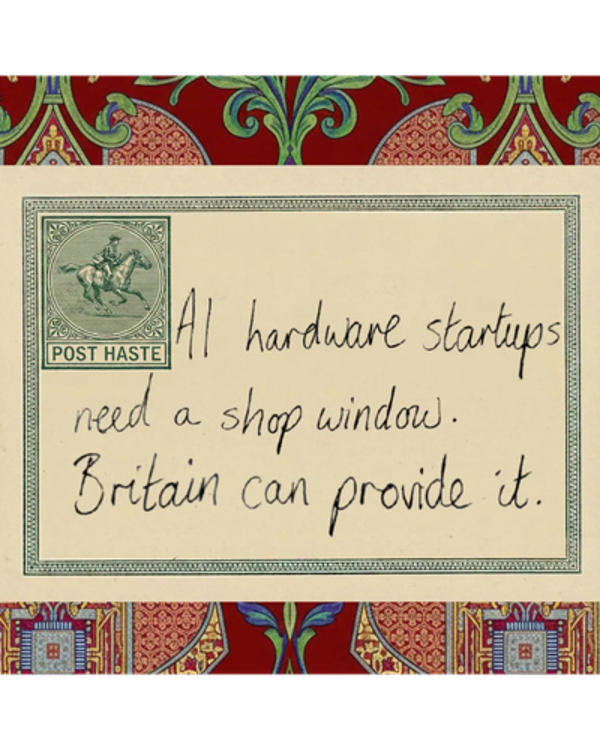

AI hardware startups need a shop window. Britain can provide it.

Centre for British Progress

Why this country is uniquely positioned to deliver the Scaling Inference Lab.
